
We are not able to use the redis-trib fix command to fix a cluster when the master and slave for a particular set of slots both go down at the same time.

ERR Not all 16384 slots are covered by nodes.

Fixing slots coverage.

List of not covered slots: 5460

Slot 5460 has keys in 0 nodes:

The folowing uncovered slots have no keys across the cluster: 5460

Fix these slots by covering with a random node? (type 'yes' to accept): yes

Covering slot 5460 with 192.168.56.160:7002

Run check again.

In a minimal Redis Cluster made up of three master nodes, each with a single slave node (to allow minimal failover), each master node is assigned a range of hash slots between 0 and 16383. Node A contains hash slots 0 to 5000, node B 5001 to 10000, and node C 10001 to 16383.
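The slot-to-node mapping described above can be sketched in a few lines. Redis Cluster hashes a key (or only the part inside a `{hash tag}`, if present) with CRC16 in its XMODEM variant and takes the result modulo 16384. The snippet below is a minimal sketch of that, plus a lookup against the example A/B/C ranges; `NODE_RANGES`, `key_slot` and `node_for_slot` are hypothetical names chosen for illustration.

```python
# Sketch: key -> hash slot -> owning master, using the example A/B/C layout.
# NODE_RANGES and the helper names are illustrative, not a Redis API.

NUM_SLOTS = 16384

# Example layout from the text: A 0-5000, B 5001-10000, C 10001-16383.
NODE_RANGES = [
    ("A", 0, 5000),
    ("B", 5001, 10000),
    ("C", 10001, 16383),
]


def crc16_xmodem(data: bytes) -> int:
    """CRC16-CCITT, XMODEM variant: polynomial 0x1021, initial value 0."""
    crc = 0
    for byte in data:
        crc ^= byte << 8
        for _ in range(8):
            if crc & 0x8000:
                crc = ((crc << 1) ^ 0x1021) & 0xFFFF
            else:
                crc = (crc << 1) & 0xFFFF
    return crc


def key_slot(key: str) -> int:
    """Hash slot of a key; a non-empty {tag} restricts hashing to the tag."""
    raw = key.encode()
    start = raw.find(b"{")
    if start != -1:
        end = raw.find(b"}", start + 1)
        if end != -1 and end != start + 1:
            raw = raw[start + 1:end]
    return crc16_xmodem(raw) % NUM_SLOTS


def node_for_slot(slot: int) -> str:
    """Return the example master that owns `slot`."""
    for name, first, last in NODE_RANGES:
        if first <= slot <= last:
            return name
    raise LookupError(f"slot {slot} is not covered by any node")


if __name__ == "__main__":
    for key in ("foo", "{user1000}.following", "{user1000}.followers"):
        slot = key_slot(key)
        print(key, slot, node_for_slot(slot))
```

Keys sharing a hash tag, such as the two `{user1000}` keys, always land in the same slot and therefore on the same master.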

Versions: redis 3.2.11, redis-cli 4.0.1, redis-trib (redis 3.3.3 gem)

Our use case: we are writing a Redis Cluster orchestrator in which nodes are added and removed often. When a node goes down, we want its slots to be covered as quickly as possible by other masters in the cluster. Also, we are only using Redis as a cache right now, so we don't necessarily care that assigning the slots to another master results in data loss.

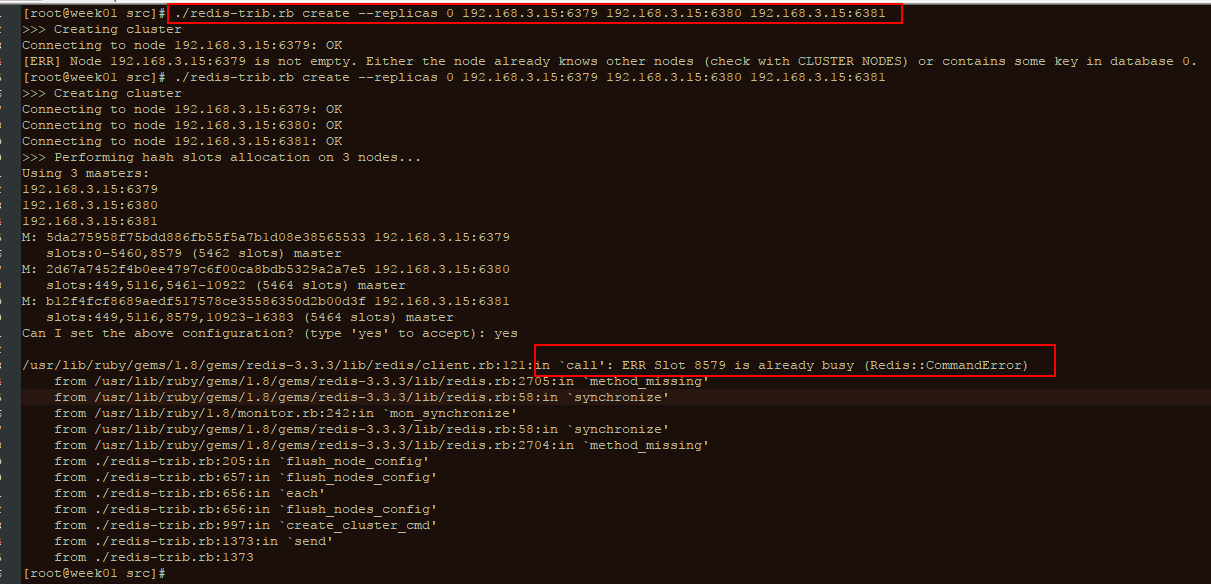

Steps to reproduce

Result:

redis-trib check shows the missing slots message (as expected):

What is the recommended way to recover in this situation? Neither rebalance nor fix works for us. Should we be using CLUSTER ADDSLOTS to manually assign the slots to other masters?
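One way to approach the ADDSLOTS question is to plan the reassignment outside redis-trib. The sketch below is hypothetical and assumes you already have the text of CLUSTER NODES from a surviving node. It treats a slot as uncovered unless a master without the `fail`, `fail?`, `noaddr` or `handshake` flags owns it, never picks slaves as targets, and splits the uncovered slots into one contiguous chunk per healthy master. CLUSTER ADDSLOTS assigns slots to the node that receives the command, so each chunk would be sent to its own master. It only builds a plan: if other nodes still map a slot to the failed master, ADDSLOTS may refuse that slot, so treat the output as a starting point rather than a complete fix.

```python
# Hypothetical planning helper (not part of redis-trib): reads CLUSTER NODES
# text from one surviving node and plans CLUSTER ADDSLOTS calls. It does not
# connect to Redis; sending the commands is left to the caller.

NUM_SLOTS = 16384
# Flags that disqualify a master from receiving slots in this sketch.
UNHEALTHY_FLAGS = {"fail", "fail?", "noaddr", "handshake"}


def parse_nodes(text):
    """Yield (node_id, address, flags, owned_slots) for each CLUSTER NODES line."""
    for line in text.strip().splitlines():
        fields = line.split()
        node_id = fields[0]
        address = fields[1].split("@")[0]  # Redis 4.0+ appends "@cport"
        flags = set(fields[2].split(","))
        owned = set()
        for item in fields[8:]:
            if item.startswith("["):  # migrating/importing marker, not ownership
                continue
            first, _, last = item.partition("-")
            owned.update(range(int(first), int(last or first) + 1))
        yield node_id, address, flags, owned


def plan_addslots(text):
    """Return (uncovered_slots, {master_address: [slots to add there]})."""
    nodes = list(parse_nodes(text))
    # Only healthy masters are candidates; slaves are never chosen.
    healthy = [n for n in nodes if "master" in n[2] and not (n[2] & UNHEALTHY_FLAGS)]
    if not healthy:
        raise RuntimeError("no healthy master available to take slots")
    covered = set().union(*(owned for _, _, _, owned in healthy))
    uncovered = sorted(set(range(NUM_SLOTS)) - covered)
    # One contiguous chunk per healthy master (ceiling division).
    chunk = max(1, -(-len(uncovered) // len(healthy)))
    plan = {}
    for i, (_, address, _, _) in enumerate(healthy):
        part = uncovered[i * chunk:(i + 1) * chunk]
        if part:
            plan[address] = part
    return uncovered, plan
```

Each entry in the returned plan corresponds to one `redis-cli -h <ip> -p <port> CLUSTER ADDSLOTS <slot> ...` call against that master.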

Result of rebalance:

Rebalance is not allowed to run until we fix the cluster.

Output of rebalance:

Fix distributes the uncovered slots to both master and slave addresses (which surprised us, as we expected it to use only masters). After fix completes, the nodes don't agree about the configuration, and gossip does not correct the disagreement, which leaves the cluster in an even worse state. The issue is easy for us to reproduce.
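To detect the disagreement described above, an orchestrator could compare the slot map that each node reports, similar in spirit to the "All nodes agree about slots configuration" line that redis-trib check prints. This is a minimal sketch that assumes the CLUSTER NODES output from each reachable node has already been collected into a dict; `slot_map` and `nodes_agree` are hypothetical names.

```python
# Minimal sketch: decide whether every reporting node sees the same slot
# ownership. Collecting CLUSTER NODES from each node is assumed, not shown.


def slot_map(text):
    """Map owner node ID -> frozenset of owned slots, from one node's view."""
    owners = {}
    for line in text.strip().splitlines():
        fields = line.split()
        slots = set()
        for item in fields[8:]:
            if item.startswith("["):  # ignore migrating/importing markers
                continue
            first, _, last = item.partition("-")
            slots.update(range(int(first), int(last or first) + 1))
        if slots:
            owners[fields[0]] = frozenset(slots)
    return owners


def nodes_agree(views):
    """views: {reporting_node: CLUSTER NODES text}. True if all slot maps match."""
    maps = [slot_map(text) for text in views.values()]
    return all(m == maps[0] for m in maps[1:])
```

Comparing expanded slot sets rather than the raw range strings keeps the check independent of how each node happens to format its ranges.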

Is there an issue with fix, or is it simply not correct to use it in our use case, where slots can be lost because a master and all of its slaves go down at once? (We noticed in issue #3007 (comment) that fix is not expected to handle this situation anyway.) In our use case, nodes come and go often, so we do not always know when a set of slots is going away completely.

Output of fix (truncated):

Some time ago, one of the machines in our company's Redis cluster went down, which also caused some of the slot shards to be lost.

When we used check to inspect the cluster's state, we ran into an error:

[root@node01 src]# ./redis-trib.rb check 172.168.63.202:7000

Connecting to node 172.168.63.202:7000: OK

Connecting to node 172.168.63.203:7000: OK

Connecting to node 172.168.63.201:7000: OK

>>> Performing Cluster Check (using node 172.168.63.202:7000)

M: 449de2d2a4b799ceb858501b5b78ab91504c72e0 172.168.63.202:7000

slots: (0 slots) master

0 additional replica(s)

M: db9d26b1d15889ad2950382f4f32639606f9a94b 172.168.63.203:7000

slots: (0 slots) master

0 additional replica(s)

M: f90924f71308eb434038fc8a5f481d3661324792 172.168.63.201:7000

slots: (0 slots) master

0 additional replica(s)

[OK] All nodes agree about slots configuration.

>>> Check for open slots..

>>> Check slots coverage..

[ERR] Not all 16384 slots are covered by nodes.
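In the check output above, all three masters hold 0 slots, so every one of the 16384 slots is uncovered. A minimal sketch of an even split across those masters, modelled on the contiguous ranges that redis-trib create produces for three masters (0-5460, 5461-10922, 10923-16383), is shown below. The function name `even_split` is hypothetical, and the resulting ranges would still have to be assigned, for example with CLUSTER ADDSLOTS on each master.

```python
# Sketch modelled on the contiguous split redis-trib create uses; the name
# even_split is hypothetical.


def even_split(masters, num_slots=16384):
    """Return {master: (first_slot, last_slot)} with near-equal contiguous ranges."""
    per_node = num_slots / len(masters)
    ranges = {}
    first = 0
    cursor = 0.0
    for i, master in enumerate(masters):
        if i == len(masters) - 1:
            last = num_slots - 1  # the last master absorbs any rounding remainder
        else:
            last = round(cursor + per_node - 1)
        ranges[master] = (first, last)
        first = last + 1
        cursor += per_node
    return ranges


# The three masters reported by check above, in the order it listed them.
masters = ["172.168.63.202:7000", "172.168.63.203:7000", "172.168.63.201:7000"]
for master, (first, last) in even_split(masters).items():
    print(master, f"{first}-{last}")
```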

Cause:

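This usually happens because a master node was removed without first removing (migrating away) the slots it owned, so the total number of assigned slots no longer reaches 16384; in other words, the slot distribution is incorrect. When deleting a node, always check whether it is a master.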
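The cause described above can be illustrated with the example A/B/C layout (A: 0-5000, B: 5001-10000, C: 10001-16383): if master B is deleted while it still owns its slots, the remaining ranges no longer add up to 16384. The hypothetical helper below lists the uncovered ranges, which is the same information check and fix report as not covered slots.

```python
# Hypothetical helper: list slot ranges not covered by any (first, last) range.


def missing_ranges(owned, num_slots=16384):
    """Return (first, last) ranges of slots that no range in `owned` covers."""
    covered = [False] * num_slots
    for first, last in owned:
        for slot in range(first, last + 1):
            covered[slot] = True
    gaps = []
    start = None
    # A trailing True sentinel closes a gap that runs to the last slot.
    for slot, is_covered in enumerate(covered + [True]):
        if not is_covered and start is None:
            start = slot
        elif is_covered and start is not None:
            gaps.append((start, slot - 1))
            start = None
    return gaps


# Example layout with master B (5001-10000) removed but its slots never moved.
print(missing_ranges([(0, 5000), (10001, 16383)]))
```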

1) The officially recommended approach is to repair the cluster with redis-trib.rb fix…. …. From cluster nodes we can see that node 7001 has been taken down… so:

[root@node01 src]# ./redis-trib.rb fix 172.168.63.201:7001


After the fix completes, run check again to verify that the cluster is correct:


[root@node01 src]# ./redis-trib.rb check 172.168.63.202:7000

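You only need to pass any one node of the cluster; check automatically inspects all related nodes. Look at the output to see whether every master now has slots. If the distribution is uneven, you can redistribute the slots as follows: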


[root@node01 src]# ./redis-trib.rb reshard 172.168.63.201:7001
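reshard asks interactively how many slots to move, which node should receive them, and which nodes to take them from. A simplified sketch for deciding those numbers, assuming the goal is an equal slot count per master, is below. It is not redis-trib's rebalance algorithm (which also supports options such as weights and a threshold), and `balance_moves` is a hypothetical name.

```python
# Simplified sketch: how many slots each master should gain (positive) or
# give up (negative) to reach equal counts. Not redis-trib's own algorithm.


def balance_moves(slot_counts):
    """Return {master: delta}; positive = receive slots, negative = give slots."""
    masters = sorted(slot_counts)
    base, extra = divmod(sum(slot_counts.values()), len(masters))
    # Spread the remainder one slot at a time over the first masters.
    targets = {m: base + (1 if i < extra else 0) for i, m in enumerate(masters)}
    return {m: targets[m] - slot_counts[m] for m in masters}


# Example: one master holds every slot, two are empty.
print(balance_moves({"A": 16384, "B": 0, "C": 0}))
```

In that example, B and C would each need to receive 5461 slots from A, which corresponds to two reshard runs (one per receiving node) with A as the source.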
